The human brain, with its billions of interconnected neurons, serves as a remarkable model for artificial intelligence (AI) systems. Neurons communicate through electrical impulses and chemical signals, forming intricate networks that process information, learn from experience, and make decisions. This biological mechanism of learning and adaptation inspired the development of artificial neural networks (ANNs).

Neural networks in AI loosely mimic the brain’s structure and function. They comprise interconnected nodes (artificial neurons) organized in layers: input nodes receive data, hidden layers transform it through weighted connections, and output nodes generate responses. Each node applies an activation function to the weighted sum of its inputs. The network learns through backpropagation, where the error between its output and the expected result is calculated and propagated backward to adjust the connection weights, improving performance over time.
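As a rough illustration of that learning loop, the sketch below trains the smallest possible layered network (one input, one hidden neuron, one output neuron) on a single example. The starting weights, learning rate, and target value are arbitrary assumptions chosen for the demonstration, not values from any real system.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(params, x):
    """Forward pass: input -> one hidden neuron -> one output neuron."""
    w1, b1, w2, b2 = params
    h = sigmoid(w1 * x + b1)   # hidden activation
    y = w2 * h + b2            # linear output
    return h, y

def train_step(params, x, target, lr=0.1):
    """One backpropagation step on a squared-error loss."""
    w1, b1, w2, b2 = params
    h, y = forward(params, x)
    loss = 0.5 * (y - target) ** 2

    # Backward pass: apply the chain rule from the output toward the input.
    dy = y - target            # dLoss/dy
    dw2 = dy * h
    db2 = dy
    dh = dy * w2               # error propagated back to the hidden neuron
    dz = dh * h * (1.0 - h)    # derivative of sigmoid is h * (1 - h)
    dw1 = dz * x
    db1 = dz

    # Gradient descent: move each weight against its gradient.
    new_params = (w1 - lr * dw1, b1 - lr * db1, w2 - lr * dw2, b2 - lr * db2)
    return new_params, loss

params = (0.5, 0.0, -0.3, 0.0)  # arbitrary starting weights (assumption)
losses = []
for _ in range(200):
    params, loss = train_step(params, x=1.0, target=0.8)
    losses.append(loss)

print(f"first loss: {losses[0]:.4f}, last loss: {losses[-1]:.2e}")
```

Real networks repeat the same two passes over many neurons and many examples, usually with a library that computes the gradients automatically.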

Artificial Neural Networks Explained: Structure and Function

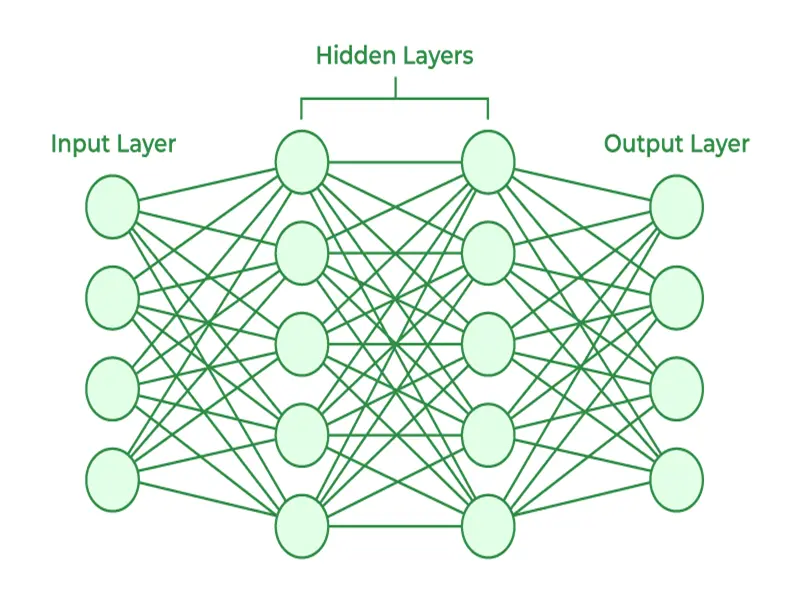

Understanding the architecture of artificial neural networks (ANNs) is crucial to grasping their capabilities and applications. ANNs consist of three main types of layers:

- Input Layer: Receives data inputs, such as images, text, or numerical values.

- Hidden Layers: Process data through weighted connections and activation functions. Deep neural networks have multiple hidden layers, enabling complex feature extraction and pattern recognition.

- Output Layer: Produces the network’s final predictions or classifications based on processed inputs.

Activation functions like sigmoid and ReLU (Rectified Linear Unit) determine how each node transforms its weighted input, introducing the non-linearities essential for capturing complex relationships in data. Softmax is typically applied across the output layer to turn raw scores into class probabilities.
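To make the layer structure and activation functions more concrete, here is a minimal sketch of a forward pass through a network with two inputs, three hidden nodes, and two output classes. The weights are hypothetical, hand-picked values; a trained network would learn them from data.

```python
import math

def sigmoid(z):
    """Squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def relu(z):
    """Passes positive values through and clips negatives to zero."""
    return max(0.0, z)

def softmax(zs):
    """Turns a list of scores into probabilities that sum to 1."""
    m = max(zs)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(z - m) for z in zs]
    total = sum(exps)
    return [e / total for e in exps]

def dense(inputs, weights, biases):
    """Weighted sum per node; weights[j] holds node j's incoming weights."""
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]

def forward(x, hidden_w, hidden_b, out_w, out_b):
    hidden = [relu(z) for z in dense(x, hidden_w, hidden_b)]  # hidden layer
    return softmax(dense(hidden, out_w, out_b))               # output layer

# Hypothetical weights for illustration only.
x = [1.0, 2.0]
hidden_w = [[0.5, -0.25], [-1.0, 0.5], [0.25, 0.25]]
hidden_b = [0.0, 0.0, 0.0]
out_w = [[1.0, 0.0, 1.0], [0.0, 1.0, -1.0]]
out_b = [0.0, 0.0]

probs = forward(x, hidden_w, hidden_b, out_w, out_b)
print(probs)  # two class probabilities
```

Without the `relu` call, the two layers would collapse into a single linear transformation, which is why the non-linearity matters.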

Different Types of Neural Networks: Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs)

Neural networks are tailored for specific tasks, leading to various architectures designed to excel in different domains:

- Convolutional Neural Networks (CNNs): Primarily used in image recognition and computer vision tasks, CNNs leverage convolutional layers and pooling operations to extract features hierarchically. Early layers respond to simple patterns such as edges and textures, and deeper layers combine them into larger shapes and objects, making CNNs effective in tasks like object detection, facial recognition, and image segmentation.

- Recurrent Neural Networks (RNNs): Unlike feedforward networks such as CNNs, RNNs maintain internal memory through feedback loops, enabling them to process sequential data such as time series, speech, and text. Basic RNNs struggle with long sequences because gradients shrink as they are propagated back through many time steps (the vanishing gradient problem). Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU) architectures mitigate this with gating mechanisms, enabling them to capture long-range dependencies and contextual information. Applications include language translation, sentiment analysis, and speech recognition.
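The core operations behind both architectures are small enough to sketch directly. The example below shows a convolution followed by max pooling on a toy image, then a single-number recurrent state carried across a short sequence. The image, kernel, and weights are made-up values for illustration, and the recurrent part is a plain RNN, not an LSTM or GRU.

```python
import math

def conv2d(image, kernel):
    """Stride-1 convolution without padding (cross-correlation, as in CNN layers)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b]
                for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

def max_pool2d(fmap, size=2):
    """Non-overlapping max pooling; leftover rows/columns are dropped."""
    return [
        [
            max(fmap[i + a][j + b] for a in range(size) for b in range(size))
            for j in range(0, len(fmap[0]) - size + 1, size)
        ]
        for i in range(0, len(fmap) - size + 1, size)
    ]

def rnn_step(x, h_prev, w_x, w_h, b):
    """One step of a scalar vanilla RNN: h_t = tanh(w_x*x_t + w_h*h_prev + b)."""
    return math.tanh(w_x * x + w_h * h_prev + b)

# CNN part: a 5x5 image that is dark on the left and bright on the right.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
edge_kernel = [[-1, 1], [-1, 1]]          # responds to dark-to-bright edges
features = conv2d(image, edge_kernel)     # 4x4 feature map
pooled = max_pool2d(features)             # 2x2 summary of the feature map

# RNN part: only the first input is non-zero, yet later states remember it.
h = 0.0
states = []
for x_t in [1.0, 0.0, 0.0]:
    h = rnn_step(x_t, h, w_x=1.0, w_h=0.5, b=0.0)
    states.append(h)

print(pooled)
print(states)
```

The pooled map keeps the edge response while shrinking the data, and the recurrent state fades gradually instead of resetting, which is the "memory" the feedback loop provides.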

Applications of Neural Networks: From Self-Driving Cars to Medical Diagnosis

Neural networks have revolutionized various industries, showcasing their adaptability and transformative potential:

- Autonomous Vehicles: In self-driving cars, neural networks analyze sensor data (e.g., cameras, LiDAR, radar) to detect objects, predict trajectories, and make real-time driving decisions. Deep learning models, combined with reinforcement learning algorithms, enhance vehicle navigation, safety, and efficiency.

- Healthcare: Neural networks contribute significantly to medical imaging analysis, disease diagnosis, and personalized treatment plans. CNNs analyze MRI, CT scans, and X-rays for anomaly detection, while RNNs process patient data for risk prediction and treatment optimization. AI-driven medical devices and telemedicine platforms improve healthcare accessibility and outcomes.

- Finance and Trading: Neural networks power predictive models for financial markets, analyzing historical data to forecast trends, risk factors, and investment opportunities. Algorithmic trading systems leverage deep learning algorithms for real-time decision-making, optimizing portfolio management and trading strategies.

- Natural Language Processing (NLP): NLP applications rely on neural networks for sentiment analysis, chatbots, language translation, and text generation. Transformer architectures, such as BERT (Bidirectional Encoder Representations from Transformers), have achieved state-of-the-art results in language understanding tasks, enabling AI systems to comprehend and generate human-like text.

The Future of Neural Networks: Advances and Possibilities

The trajectory of neural network research points toward exciting advancements and possibilities:

- Generative Adversarial Networks (GANs): GANs enable the generation of realistic content, including images, videos, and audio, by training a generator network to produce data indistinguishable from real examples, while a discriminator network learns to differentiate real from generated data. GANs have applications in art generation and data augmentation. They also underlie many deepfakes, which has spurred work on deepfake detection.

- Transformers and Attention Mechanisms: Transformers have reshaped NLP by using attention mechanisms to weigh how relevant each part of an input sequence is to every other part. Models like GPT (Generative Pre-trained Transformer) achieve impressive results in language generation and understanding, paving the way for AI systems with contextual awareness and nuanced communication capabilities.

- Neuromorphic Computing: Inspired by brain structures, neuromorphic computing architectures mimic neurons and synapses for energy-efficient and parallel processing. These systems show promise in edge computing, robotics, and cognitive computing applications, offering scalability and sustainability benefits compared to traditional computing architectures.

- Ethical Considerations: As AI technologies advance, addressing ethical considerations becomes paramount. Ensuring transparency, fairness, and accountability in AI decision-making processes mitigates biases and promotes responsible AI deployment. Collaborative efforts among researchers, policymakers, and industry stakeholders are essential for ethical AI development and governance.
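The attention mechanism mentioned in the Transformers item above can be sketched in a few lines. The example computes scaled dot-product attention, the basic operation inside transformer models, over hand-written vectors. It is a simplified sketch: real transformers derive the queries, keys, and values from learned projections and run several attention heads in parallel, and the numbers here are assumptions for illustration.

```python
import math

def softmax(scores):
    m = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def scaled_dot_product_attention(queries, keys, values):
    """For each query, return a weighted average of the values.

    A weight is high when the query points in the same direction as the
    corresponding key. Scores are divided by sqrt(d_k) before the softmax.
    """
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [dot(q, k) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        output = [
            sum(w * v[c] for w, v in zip(weights, values))
            for c in range(len(values[0]))
        ]
        outputs.append(output)
        all_weights.append(weights)
    return outputs, all_weights

# Three sequence positions, each with a key and a value (illustrative numbers).
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
queries = [[4.0, 0.0]]  # points the same way as the first key

outputs, weights = scaled_dot_product_attention(queries, keys, values)
print(weights[0])  # most of the weight falls on the first position
print(outputs[0])  # so the output is close to the first value
```

Because the weights are recomputed for every query, each position can focus on different parts of the sequence, which is what lets these models track context.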

Neural networks continue to evolve and shape the landscape of artificial intelligence, driving innovation across sectors and unlocking new capabilities. Understanding their fundamental principles, diverse architectures, applications, and ethical implications is crucial for leveraging AI’s potential while navigating challenges responsibly. The future holds exciting prospects as neural network research progresses, paving the way for transformative AI-driven solutions and experiences.

As experts in the field of artificial intelligence, Mindlab is well-equipped to tackle diverse projects and provide valuable advice. With a deep understanding of neural networks and their applications, Mindlab offers comprehensive solutions tailored to clients’ needs. Whether it’s developing cutting-edge AI models, optimizing neural network architectures, or addressing ethical considerations in AI deployment, Mindlab’s expertise ensures high-quality outcomes and informed decision-making. Clients can rely on Mindlab for innovative AI solutions and expert guidance in navigating the evolving landscape of artificial intelligence.